How Uber Uses Zig

Disclaimer: I work at Uber and am partially responsible for bringing zig cc to serious internal use. Opinions are mine, this blog post is not affiliated with Uber.

I talked at the Zig Milan meetup about “Onboarding Zig at Uber”. This post is a little about “how Uber uses Zig”, and more about “my experience of bringing Zig to Uber”, from both technical and social aspects.

The video is here. The rest of the post is a loose transcript, with some commentary and errata.

@mo_kelione is still my temporary twitter handle from 2009.

TLDR:

- Uber uses Zig to compile its C/C++ code. Now only in the Go Monorepo via bazel-zig-cc, with plans to possibly expand use of zig cc to other languages that need a C/C++ toolchain.

- Main selling points of C/C++ toolchain on top of zig-cc over the alternatives: configurable versions of glibc and macOS cross-compilation.

- Uber does not have any plans to use zig-the-language yet.

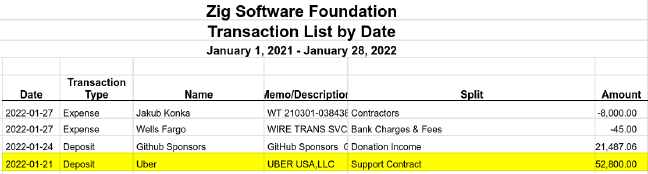

- Uber signed a support agreement with Zig Software Foundation (ZSF) to prioritize bug fixes. The contract value is disclosed in the ZSF financial reports.

- Thanks to my team, the Go Monorepo team, the Go Platform team, my director, finance, legal, and of course Zig Software Foundation for making this relationship happen. The relationship has been fruitful so far.

About Uber’s tech stack

Uber started in 2010, has clocked over 15 billion trips, and made lots of cool and innovative tech for it to happen. General-purpose “allowed” server-side languages are Go and Java, with Python and Node allowed for specific use cases (like front-end for Node and Python for data analysis/ML). C++ is used for a few low level libraries. Use of other languages in back-end code is minimal.

Our Go Monorepo is larger than the Linux kernel[1], and worked on by a couple of thousand engineers. In short, it’s big.

How does Uber use Zig?

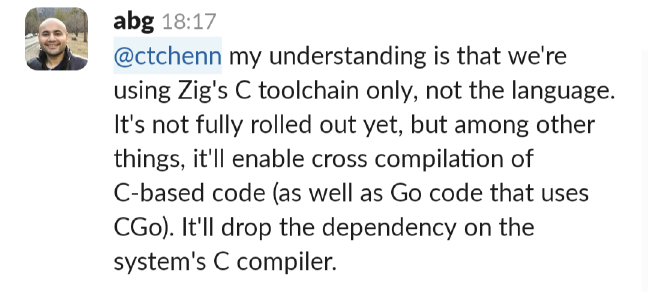

Abhinav's TLDR of the presentation.

I can’t say this better than my colleague Abhinav Gupta from the Go Platform team (a transcript is available in the “alt” attribute):

At this point of the presentation, since I explained (thanks abg!) how Uber uses Zig, I could end the talk. But you all came in for the process, so after an uncomfortable pause, I decided to tell more about it.

History

Before 2018, Uber’s Go services lived in separate repositories. In 2018[2] services started moving to the Go monorepo en masse. My team was in the first wave; I still remember the complexity.

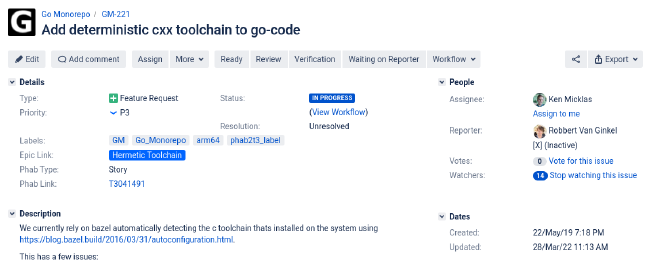

2019: asks for a hermetic toolchain

At the time, the Go monorepo already used a hermetic Go toolchain: the Go compiler used to build the monorepo was unaffected by the compiler installed on the system, if any. Whichever environment a Go build ran in, it always used the same version of Go. Bazel docs explain this better than me.

The request for a hermetic C++ toolchain was created in 2019 and did not see much movement.

A C++ toolchain is a collection of programs to compile C/C++ code. It is unavoidable for some of our Go code to use CGo, so it needs a C/C++ compiler. Go then links the Go and C parts into the final executable.

The C++ toolchain was not hermetic since the start of Go monorepo: Bazel would use whatever it found on the system. That meant Clang on macOS, GCC (whatever version) on Linux. Setting up a hermetic C++ toolchain in Bazel is a lot of work (think person-months for our monorepo), there was no immediate need, and it also was not painful enough to be picked up.

At this point it is important to understand the limitations of a non-hermetic C++ toolchain:

- Cannot cross-compile. So we can’t compile Linux executables on a Mac if they require CGo (which many of our services do). This was worked around by… not cross-compiling.

- CGo executables would link to a glibc version that was found on the system. That means: when upgrading the OS (multi-month effort), the build fleet must be upgraded last. Otherwise, if a build host runs a newer glibc than a production host, the resulting binary will link against the newer glibc version, which is incompatible with the older one still on the production host.

- We couldn’t use new compilers, which have better optimizations, because we were running an older OS on the build fleet (backporting only the compiler, but not glibc, carries its own risks).

- Official binaries for newer versions of Go are built against a more recent version of GCC than some of our build machines. We had to work around this by compiling Go from source on these machines.
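
The glibc constraint in the list above can be sketched as a simple version comparison. This is a simplified model, not how the dynamic loader actually decides: real failures depend on which versioned symbols a binary references. The function names and version numbers are made up for illustration.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseGlibc turns a version such as "2.28" into comparable integers.
func parseGlibc(v string) (major, minor int, err error) {
	parts := strings.SplitN(v, ".", 2)
	if len(parts) != 2 {
		return 0, 0, fmt.Errorf("malformed glibc version %q", v)
	}
	if major, err = strconv.Atoi(parts[0]); err != nil {
		return 0, 0, err
	}
	if minor, err = strconv.Atoi(parts[1]); err != nil {
		return 0, 0, err
	}
	return major, minor, nil
}

// canRun reports whether a binary linked against glibc `built` can be
// expected to start on a host that ships glibc `host`. Assumption: glibc is
// backward compatible, so the host must be at least as new as the version
// the binary was linked against.
func canRun(built, host string) (bool, error) {
	bMaj, bMin, err := parseGlibc(built)
	if err != nil {
		return false, err
	}
	hMaj, hMin, err := parseGlibc(host)
	if err != nil {
		return false, err
	}
	if hMaj != bMaj {
		return hMaj > bMaj, nil
	}
	return hMin >= bMin, nil
}

func main() {
	// Hypothetical fleet where build hosts were upgraded before production.
	ok, _ := canRun("2.31", "2.28")
	fmt.Println("built against 2.31, deployed to 2.28:", ok)

	// Build hosts upgraded last, as described in the list above.
	ok, _ = canRun("2.28", "2.31")
	fmt.Println("built against 2.28, deployed to 2.31:", ok)
}
```

With a non-hermetic toolchain the `built` value is whatever the build host happens to ship, which is why the order of OS upgrades matters.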

All of these issues were annoying, but not enough to invest into the toolchain.

2020 Dec: need musl

I was working on a non-Uber-related toy project that is built with Bazel and uses CGo. I wanted my binary to be static, but Bazel does not make that easy. I spent a couple of evenings creating a Bazel toolchain on top of musl.cc, but didn’t get far, because at the time I wasn’t able to make sense of Bazel’s toolchain documentation, and I didn’t find a good example to rely on.

2021 Jan: discovering zig cc

In January of 2021 I found Andrew Kelley’s blog post zig cc: a Powerful Drop-In Replacement for GCC/Clang. I recommend reading the article; it changed how I think about compilers (and it will help you understand the rest of this article better, because I gave the talk to a Zig audience). To sum up Andrew’s article, zig cc has the following advantages:

- Fully hermetic C/C++ compiler in ~40MB tarball. This is an order of magnitude smaller than the standard Clang distributions.

- Can link against a glibc version that was provided as a command-line argument (e.g. -target x86_64-linux-gnu.2.28 will compile for x86_64 Linux and link against glibc 2.28).

- Host and target are decoupled. The setup is the same for both linux-aarch64 and darwin-x86_64 targets, regardless of the host.

- Linking with musl is “just a different libc version”: -target x86_64-linux-musl.
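
To make the -target format from the list above concrete, here is a minimal Go sketch that assembles the target string and a zig cc command line. The Target type, its fields and the file names hello.c and hello are hypothetical; only the -target values for x86_64 come from Andrew’s article, and the aarch64 one is my assumption following the same pattern. The sketch only prints the commands, it does not run zig.

```go
package main

import (
	"fmt"
	"strings"
)

// Target is a hypothetical description of what to compile for.
type Target struct {
	Arch  string // e.g. "x86_64", "aarch64"
	OS    string // e.g. "linux"
	ABI   string // e.g. "gnu", "musl"; may be empty
	Glibc string // e.g. "2.28"; only used together with the "gnu" ABI
}

// Triple renders the value passed to `zig cc -target`.
func (t Target) Triple() string {
	parts := []string{t.Arch, t.OS}
	if t.ABI != "" {
		abi := t.ABI
		if abi == "gnu" && t.Glibc != "" {
			// The glibc version is appended to the ABI, e.g. "gnu.2.28".
			abi += "." + t.Glibc
		}
		parts = append(parts, abi)
	}
	return strings.Join(parts, "-")
}

// CompileArgs builds the argv for compiling a single C file.
func (t Target) CompileArgs(src, out string) []string {
	return []string{"zig", "cc", "-target", t.Triple(), "-o", out, src}
}

func main() {
	targets := []Target{
		{Arch: "x86_64", OS: "linux", ABI: "gnu", Glibc: "2.28"},
		{Arch: "x86_64", OS: "linux", ABI: "musl"},
		{Arch: "aarch64", OS: "linux", ABI: "gnu", Glibc: "2.28"},
	}
	for _, t := range targets {
		// The same command shape works regardless of the host.
		fmt.Println(strings.Join(t.CompileArgs("hello.c", "hello"), " "))
	}
}
```

The point of the sketch is that the host never appears anywhere: switching libc or architecture is a change to one string.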

I started messing around with zig cc. I compiled random programs, reported issues. I thought about making this a bazel toolchain, but there were quite a few blocking bugs or missing features. One of them was lack of zig ar, which Bazel relies on.

2021 Feb: asking for attention

I reported bugs to Zig. Nothing happened for a week. I donated $50/month, expecting “the Zig folks” to prioritize what I’ve reported. A week of silence again. And then I dropped the bomb in #zig:libera.chat:

<motiejus> What is the protocol to "claim" the dev hours once donated?

<andrewrk> ZSF only accepts no-strings-attached donations

<andrewrk> did you get a different impression somewhere?

Oops. At the time I hoped that whoever noticed the conversation would immediately forget it. Well, here it is again, more than a year later, for your enjoyment.

2021 June: bazel-zig-cc and Uber’s Go monorepo

In June of 2021 Adam Bouhenguel created a working bazel-zig-cc prototype. The basics worked, but it still lacked some features. Andrew later implemented zig ar[3], which was the last missing piece to a truly workable bazel-zig-cc. I integrated zig ar, polished the documentation and announced my fork of bazel-zig-cc to the Zig mailing list. At this point it was usable for my toy project. Win!

A few weeks after the announcement I created a “WIP DIFF” for Uber’s Go monorepo: just used my onboarding instructions and naïvely submitted it to our CI. It failed almost all tests.

Onboarding bazel-zig-cc to Uber's Go monorepo.

Most of the failures were caused by dependencies on system libraries. At this point it was clear that, to truly onboard bazel-zig-cc and compile all of the monorepo’s C/C++ code with it, quite a lot of investment was needed to remove the dependencies on system libraries and to undo a lot of technical debt.

2021 End: recap

- Various places at Uber would benefit from a hermetic C++ cross-compiler, but it’s not funded due to requiring a large investment and not having enough justification.

- bazel-zig-cc kinda works, but both bazel-zig-cc and zig cc have known bugs.

- I can’t realistically implement the necessary changes or bug fixes. I tried implementing zig ar, a trivial front-end for LLVM’s ar, and failed.

- Once an issue had been identified as a Zig issue, getting attention from Zig developers was unpredictable. Some issues got resolved within days, some took more than 6 months, and donations didn’t change zig cc priorities.

- The monorepo-onboarding diff was simmering and waiting for its time.

2021 End: Uber needs a cross-compiler

I was tasked to evaluate arm64 for Uber. Evaluation details aside, I needed to compile software for linux-arm64. Lots of it! Since most of our low-level infra is in the Go monorepo, I needed a cross-compiler there first.

A business reason for a cross-compiler landed in my lap. Now both time and money could be invested there. Having a “WIP diff” with zig cc was a good start, but the work was still very far from done: teams were not convinced it was the right thing to do, the diff was too much of a prototype, and both zig-cc and bazel-zig-cc needed lots of work before they could be used in any capacity at Uber.

When onboarding such a technology in a large corporation, the most important thing to manage is risk. As Zig is a novel technology (not even 1.0!), it was truly unusual to suggest compiling all of our C and C++ code with it. We should be planning to stick with it for at least a decade. Questions were raised and evaluated with great care and scrutiny. For that I am truly grateful to the Go Monorepo team, especially Ken Micklas, for doing the work and research on this unproven prototype.

Evaluation of different compilers

Given that we now needed a cross-compiler, we had two candidates:

- grailbio/bazel-toolchain. Uses a vanilla Clang. No risk. Well understood. Obviously safe and correct solution.

- ~motiejus/bazel-zig-cc: uses zig cc. Buggy, risky, unsafe, uncertain, used-by-nobody, but quite a tempting solution.

zig cc provides a few extra features on top of bazel-toolchain:

- configurable glibc version. With grailbio you would need a sysroot (basically, a chroot with the system libraries, so the programs can be linked against them), which would need to be maintained.

- a working, albeit still buggy, hermetic (cross-)compiler for macOS.

We would be able to handle glibc with either; however, grailbio is unlikely to ever have a way to compile to macOS, let alone cross-compile. Relying on the system compiler is undesirable on developer laptops, and the Go Platform team feels that first-hand, especially during macOS upgrades.

The prospect of a hermetic toolchain for macOS targets tipped the scales towards zig cc, with all its warts, risks and instability.

There was still another problem, one of attention: if we were considering the use of Zig in a serious capacity, we knew we would hit problems, but would be unlikely to have the expertise to solve them. How can we, as a BigCorp, de-risk the engagement question, making sure that bugs important to us are handled in a timely manner? We were sure of the good intentions of ZSF: it was obvious that, if we found and reported a legitimate bug, it would get fixed. But how can we put an upper bound on latency?

Money

A $50 donation does not help; perhaps a large service contract would? I asked around if we could spend some money to de-risk our “cross-compiler”. Getting a green light from the management took about 10 minutes; drafting, approving and signing the contract took about 2 months.

Contract terms were roughly as follows:

- Uber reports issues to github.com/ziglang/zig and pings Loris.

- Loris assigns it to someone in ZSF.

- Hack hack hack hack hack.

- When done, Loris enters the number of hours worked on the issue.

Uber has a right to ZSF members’ time. We have no decision or voting power whatsoever with regards to Zig. We have the right to offer suggestions, but they have been and will be treated just like those from any other third-party bystander. We did not ask for special rights, it’s explicit in the contract, and we don’t want that.

The contract was signed, the wire transfer completed, and in January 2022 we had:

- A service contract with ZSF that promised to prioritize issues that we’ve registered.

- A commitment from Go Platform team to make our C++ toolchain cross-compiling and hermetic.

The amount of money that changed hands is public, because ZSF is a nonprofit.

2022 and beyond

In Feb 2022 the toolchain was gated behind a command-line flag (--config=hermetic-cc). As of Feb 2022, you can invoke zig cc in Uber’s Go Monorepo without requiring a custom patch.

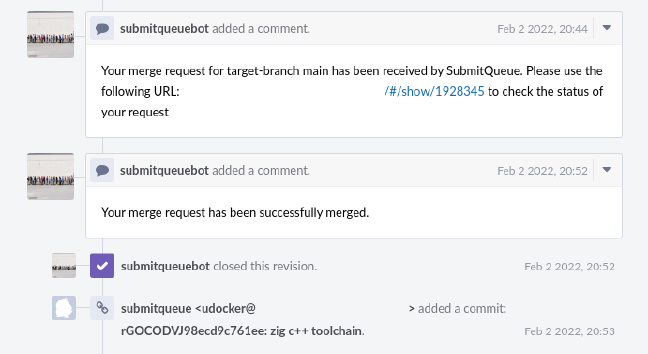

Proof that our submitqueue landed my WIP DIFF.

Timeline of 2022 so far:

- In April, around my talk in Milan, we shipped the first Debian package compiled with zig-cc to production.

- In May we enabled zig cc for all our Debian packages.

- In H2 we expect to compile all our cgo code with zig cc and make --config=hermetic-cc the default.

- In H2 we expect to move bazel-zig-cc under github.com/uber.

We have opened a number of issues to Zig, and, as of writing, all of them have been resolved. Some were handled by ZSF alone, some were more involved and required collaboration between ZSF, Uber and Go developers.

Summary

I started preparing for the presentation hoping I could give “a runbook” for how to adopt Zig at a big company. However, there is no runbook; my effort to onboard zig-cc could have failed for many, many reasons.

Looking back, I think the most important reason for success is a killer feature at the right time. In our case, there were two: glibc version selection without a sysroot and cross-compiling to macOS.

Appendix

I forgot to flip to the last slide in the presentation. Here it is:

If compilers or adapting software for other CPU architectures (and/or living in Eastern Europe) is your thing, my team in Vilnius is hiring. My sister teams in Seattle and the Bay Area are hiring too. Ping me.

Credits

Many thanks to Abhinav Gupta, Loris Cro and Ken Micklas for reading drafts of this.